Try it on VESSL Hub

Try out the Quickstart example with a single click on VESSL Hub.

See the final code

See the completed YAML file and final code for this example.

What you will do

- Use vessl.log() to log the model's key metrics to VESSL during training
- Define a YAML for batch experiments

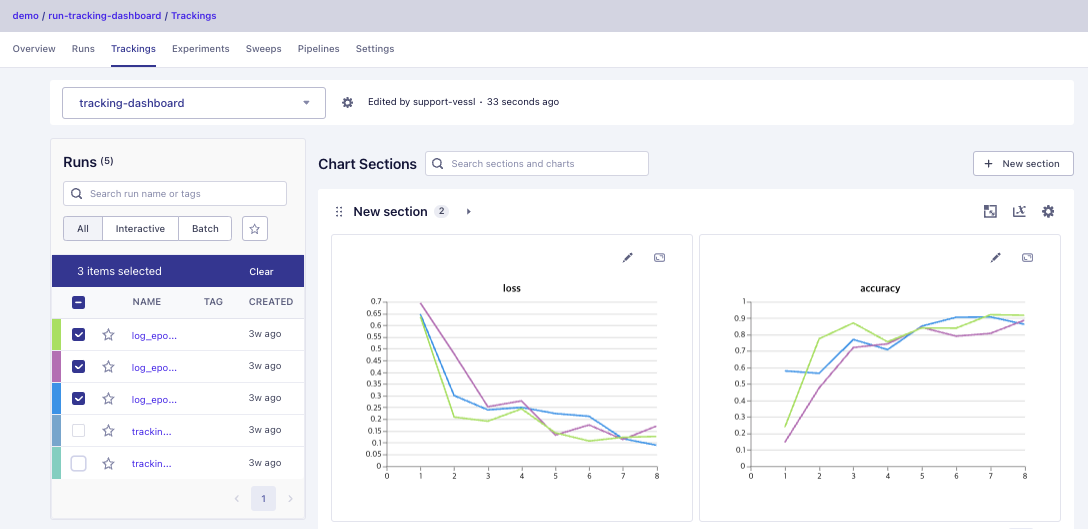

- Use Run Dashboard to track, visualize, and review experiments

Using our Python SDK

You can log metrics like accuracy and loss during each epoch with vessl.log().

For example, the main.py example below calculates the accuracy and loss at each epoch which we receive as environment variables and logs them to VESSL.
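As a rough illustration of that pattern, here is a minimal sketch of what such a script could look like. It is not the actual main.py from the example: the environment variable names `epochs` and `lr`, their defaults, and the `simulate_epoch` placeholder are assumptions made for illustration, and the `vessl.log(payload=..., step=...)` call reflects our understanding of the SDK, so check it against the SDK reference. If the vessl package is not installed, the sketch falls back to printing.

```python
import os
import random

try:
    import vessl  # VESSL Python SDK
    log_metrics = vessl.log
except ImportError:
    # Fallback so the sketch still runs without the SDK installed.
    def log_metrics(payload, step=None):
        print(f"step={step} {payload}")


def simulate_epoch(epoch, lr, rng):
    """Return placeholder (accuracy, loss) values; stands in for real training."""
    noise = rng.random() * lr
    loss = 1.0 / (epoch + 1) + noise
    accuracy = 1.0 - loss / 2
    return accuracy, loss


def train(epochs, lr, log_fn, seed=0):
    """Run the placeholder loop and log one metrics payload per epoch."""
    rng = random.Random(seed)
    history = []
    for epoch in range(1, epochs + 1):
        accuracy, loss = simulate_epoch(epoch, lr, rng)
        payload = {"accuracy": accuracy, "loss": loss}
        log_fn(payload=payload, step=epoch)
        history.append(payload)
    return history


if __name__ == "__main__":
    # Hyperparameters arrive as environment variables (names are assumed here).
    epochs = int(os.environ.get("epochs", "10"))
    lr = float(os.environ.get("lr", "0.01"))
    train(epochs, lr, log_fn=log_metrics)
```

Passing the logging function in as an argument keeps the loop easy to test locally before pointing it at VESSL.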

Use vessl.log() with our YAML to (1) launch multiple jobs with different hyperparameters, (2) track the results in real time, and (3) set up a shared experiment dashboard for your team.
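To make the "multiple jobs with different hyperparameters" idea concrete, the sketch below enumerates a small hyperparameter grid and derives one file name per combination, following the log_epoch-10_lr-0.01.yaml naming pattern used in this example. The helper `experiment_variants` and the `epochs` and `lr` environment variable names are hypothetical. The sketch only plans the variants; it does not generate VESSL YAML or launch anything.

```python
from itertools import product


def experiment_variants(epoch_options, lr_options):
    """Build one entry per (epochs, lr) combination.

    Each entry holds a YAML file name in the log_epoch-<epochs>_lr-<lr>.yaml
    pattern and the environment variables the run would receive.
    """
    variants = []
    for epochs, lr in product(epoch_options, lr_options):
        variants.append({
            "filename": f"log_epoch-{epochs}_lr-{lr}.yaml",
            "env": {"epochs": str(epochs), "lr": str(lr)},
        })
    return variants


# List the planned runs for a 2 x 2 grid.
for variant in experiment_variants([10, 20], [0.01, 0.001]):
    print(variant["filename"], variant["env"])
```

Encoding the hyperparameters in the file name, as the example file does, makes each run easy to identify later on the dashboard.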

Using the YAML

Here, we have a simple log_epoch-10_lr-0.01.yaml file that runs the main.py file above on a CPU instance. Refer to our get started guide to learn how you can launch a training job.